At this point, you’ve surely heard of Generative AI, thanks to ChatGPT’s spectacular ascendancy over the last year. Everyone seems to be using it. Maybe you’ve adopted it at work to make certain tasks more efficient. Your kids might be using it in school (for better or for worse). Companies are increasingly highlighting its role in marketing campaigns. Just the other day, I asked it to generate a piece of code that reduced an hour-long spreadsheet task to just five minutes.

Even if you’ve never entered a single prompt into ChatGPT, chances are you’re already regularly interacting with the technology. Large language models—the technology behind ChatGPT and its competitors—are used to power chatbots and automate phone responses. The medical industry has adopted Generative AI (GenAI) to summarize doctor visit notes, help diagnose illnesses with greater accuracy, and develop new life-saving medications. Real-time translation apps are also continually improving thanks to this advancing technology.

It seems as if new uses spawn almost daily, and while conversations around AI and data privacy have been ongoing for years, they have increased in intensity as new generative applications are introduced, and existing tools, like ChatGPT, work through growing pains. How are we supposed to keep up with privacy concerns and potential risks when things change so rapidly?

Generative AI and Training Data

Understanding how GenAI works is a good first step. Very simply, generative AI tools are massive approximators. Algorithms are trained by absorbing an immense amount of speech, writing, and images, from all kinds of sources; then, when prompted, they are able to generate something like an average human response. The training data is key. But where does it come from?

As an experiment, let’s see what ChatGPT can tell us:

IA: “Hello ChatGPT. I am writing an article on generative AI and its associated privacy concerns. Could you tell me, in a paragraph or two, about the datasets that models like yourself are trained on?”

ChatGPT: “Generative AI tools are trained on extensive and diverse datasets that encompass a wide range of sources and linguistic styles. These datasets typically include text from sources such as books, articles, websites, forums, and conversational logs. The aim is to expose the model to a broad spectrum of language patterns, contexts, and cultural nuances. Internet text, including content from social media and online forums, allows the model to understand and generate language as it is commonly used on the web. Ethical considerations in sourcing and curating these datasets are crucial to address potential biases and ensure responsible AI development. Despite efforts to maintain diversity and fairness, challenges persist in mitigating biases inherited from the training data, raising important privacy concerns regarding the potential for inadvertently encoding sensitive or personally identifiable information in generated outputs.”

Did ChatGPT just beat me to my own point? It appears (at least, according to an approximation of the average human response to this prompt) that sensitive or personally identifiable information can be inadvertently included in training data. It is known that copyrighted materials are not excluded from the training data of large language models. According to OpenAI, the company behind ChatGPT, neither is personal information, though the company “doesn’t actively seek [it] out.”

Great! But let’s not forget that you may have consented to share your data without your awareness. “Many companies’ privacy policies, which are updated and changed all the time, may let them do exactly that,” writes Sara Morrison for Vox. “They often say something along the lines of how your data may be used to improve their existing products or develop new ones. Conceivably, that includes generative AI systems.”

So personal information can make its way into training datasets. What does this mean?

Implications for Security and Data Privacy

Despite safeguards, your data may still slip out given the right prompt. In one curious glitch this past November, ChatGPT seemed to reveal sensitive information when prompted to repeat “poem” or “book” forever. In another example, hacker-researchers at Indiana University were able to extract personal email addresses of over 30 New York Times employees from ChatGPT.

The Indiana University experiment brings to light another concerning trend: ChatGPT allows scammers and other ne’er-do-wells to increase the quality and frequency of phishing attacks. “Since the fourth quarter of 2022, there’s been a 1,265% increase in malicious phishing emails, and a 967% rise in credential phishing in particular,” writes Bob Violino for CNBC, referencing a report by cybersecurity firm SlashNext. ChatGPT also allows for a level of personalization previously unreachable. For example, “a cybercriminal might use AI to generate an email to a specific employee, posing as the individual’s boss or supervisor and referencing a company event or a relevant personal detail, making the email seem authentic and trustworthy.”

I’ve seen these emails myself. They are very convincing. Phishing is a numbers game: the higher the total output, the greater the chances that someone (even if it is just one person out of 10,000) will make a mistake and click the link. The heightened sophistication of GenAI-fueled attacks lowers that denominator.
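To make the numbers game concrete, here is a minimal sketch in Python. It assumes every recipient decides independently and with the same click rate, which is a simplification, and both rates are illustrative figures rather than measured ones. The function name is my own.

```python
def chance_of_at_least_one_click(emails_sent: int, click_rate: float) -> float:
    """Probability that at least one recipient clicks.

    Assumes each recipient clicks independently with the same probability.
    """
    return 1 - (1 - click_rate) ** emails_sent


# Illustrative rates only: the article's 1 in 10,000, and a hypothetical
# 1 in 1,000 for a more convincing, AI-polished campaign.
for label, rate in [("1 in 10,000", 1 / 10_000), ("1 in 1,000", 1 / 1_000)]:
    p = chance_of_at_least_one_click(10_000, rate)
    print(f"{label}: {p:.1%} chance of at least one click across 10,000 emails")
```

Under these assumptions, a campaign of 10,000 emails has roughly a 63% chance of landing at least one click at the 1-in-10,000 rate, and that chance climbs above 99.9% if the rate improves to 1 in 1,000.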

OK. I’ve sufficiently scared myself. With all that said, how can we protect ourselves and our data while still taking advantage of all of the benefits of these tools?

User Literacy and Best Practices

Let’s see if ChatGPT can give us any advice.

IA: “Hi again, ChatGPT. I’m chugging along on my article, and I’ve arrived at the part where I would like to offer the reader some best practices for using Generative AI tools while safeguarding their personal information and privacy. Could you write a few bullet points with your best advice on the topic?”

ChatGPT: “Certainly! Here are some best practices for using Generative AI tools while maintaining data security and privacy:

- Educate Yourself: Take some time to understand how generative AI works and its potential privacy implications. This knowledge empowers you to use the tool responsibly and make informed decisions.

- Mindful Input: Before using generative AI, review and remove any personal or sensitive details from your input. This ensures that the generated content doesn’t accidentally reveal private information.

- Regular Updates: Keep the generative AI tool and its software updated. Updates often include improvements in security and privacy features, reducing the risk of potential issues.

- Privacy-Focused Choices: Opt for tools that prioritize user privacy. Look for features that allow you to control and manage your data, and choose tools from reputable developers with a commitment to privacy.

By following these tips, individuals may enjoy using generative AI tools while safeguarding their privacy and being mindful of potential risks.”

There are some good points here, but this isn’t everything. Educating yourself on how the tools work, as ChatGPT says, is a good place to start for the personal user, and being mindful about what you enter into the chats is extremely important. (I would say ChatGPT gets the second part of the second bullet wrong: the real benefit of scrubbing your input is that it helps prevent the tool from revealing the information you just entered to someone else, sometime in the future, given the right set of prompts.) Keeping the tool updated and opting for additional privacy features, such as turning off your chat history, are also fairly simple things we can all do to safeguard our personal information.
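If you want a rough idea of what “mindful input” could look like in practice, here is a minimal sketch that scrubs a prompt before it is pasted into a chat. The `scrub` function and the three patterns are my own illustrative assumptions (US-style phone and Social Security number formats). They will miss plenty of real-world personal data, so treat this as a starting point and not as a real detection tool.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def scrub(prompt: str) -> str:
    """Replace anything matching a pattern with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


print(scrub("Email jane.doe@example.com or call 212-555-0147 about SSN 123-45-6789."))
```

The placeholders keep the prompt readable, so the tool can still help with the task without ever seeing the underlying details.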

But that’s not enough. If you or your colleagues use ChatGPT at work, there should be a set of guidelines and best practices in place. Trainings, according to tech writer Rachel Curry, are instrumental in teaching users to “adopt AI responsibly, from governing large language models to prevent data leaks, to avoiding non-compliance.” One such course, from training provider BigID, “suggests setting automatic flags for policy violations when sensitive or regulated data ends up in the wrong place. These can include existing policies but should also include new policies that manage and monitor potential risks specifically associated with generative AI.”

As for phishing attacks, it is important—both personally and at your workplace—to remain constantly vigilant. This means regularly reminding yourself of the existence of this kind of threat, and making ongoing training at the organizational level a standard. “Organizations need to conduct regular testing and security audits of systems that can be exploited,” writes Violino. “Another good practice is to implement email filtering tools that use machine learning and AI to detect and block phishing emails.” Companies can also conduct their own phishing simulations to help train their employees on what to look for.

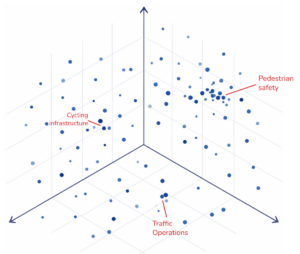

Legislative, Tech, and Large-Scale Approaches

We can also look bigger for solutions: to regulatory bodies at all levels, and to the tech companies themselves. Entities as large as the European Union have come out with regulations on AI, while countries across the world have taken their own ad-hoc approaches. Italy, for example, temporarily restricted the use of ChatGPT in April 2023 for data privacy reasons before rolling back the sanction about a month later. Around the same time, Samsung made headlines by banning ChatGPT after a large data leak, in which unsuspecting engineers fed proprietary code into the model for speedy improvements. Unlike Italy, Samsung still maintains its ban. But as Joe Payne, the CEO of risk software company Code42, says, “Specifically, banning ChatGPT might feel like a good response, but it will not solve the larger problem. ChatGPT is just one of many generative AI tools that will be introduced to the workplace in the coming years.”

Clearly, a more holistic approach is necessary. Local, state, and federal governments can take greater measures to regulate the kinds of information used in training datasets and limit personally sensitive details from escaping as output. This past summer, the European Union released the AI Act, regulating the use of Generative AI. A couple of months later, the Biden administration announced a sweeping executive order with similar intentions. On the tech side, solutions such as data privacy vaults, like the one used in PrivateGPT, seek to identify and depersonalize sensitive information before it can even enter the algorithms.
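For a feel of how a privacy vault might depersonalize text, here is a toy sketch. It is not how PrivateGPT or any real vault product is implemented. `ToyVault` is a hypothetical class of my own that only recognizes email addresses, swaps each one for an opaque token before the text leaves your machine, and keeps the mapping locally so the tokens can be swapped back in the response.

```python
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


class ToyVault:
    """Hypothetical vault: swaps emails for tokens and remembers the mapping."""

    def __init__(self) -> None:
        self._value_to_token: dict[str, str] = {}
        self._token_to_value: dict[str, str] = {}

    def _token_for(self, value: str) -> str:
        # Reuse the same token when the same address appears again.
        if value not in self._value_to_token:
            token = f"<EMAIL_{len(self._value_to_token) + 1}>"
            self._value_to_token[value] = token
            self._token_to_value[token] = value
        return self._value_to_token[value]

    def depersonalize(self, text: str) -> str:
        """Replace every email address with its token."""
        return EMAIL.sub(lambda match: self._token_for(match.group()), text)

    def restore(self, text: str) -> str:
        """Swap tokens back to the original values."""
        for token, value in self._token_to_value.items():
            text = text.replace(token, value)
        return text


original = "Draft a reply to ana@example.org, cc bo@example.org, and thank ana@example.org."
vault = ToyVault()
safe_prompt = vault.depersonalize(original)
print(safe_prompt)                 # what the AI tool would see
print(vault.restore(safe_prompt))  # what you see after restoring
```

The design choice worth noticing is that the mapping never leaves the vault: the model only ever works with the tokens, and the real values are reattached on your side.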

As GenAI technology becomes more ubiquitous, we should, ideally, be able to stick to personal and organizational best practices, remain diligent, and trust that our local and national leaders have our backs. After all, that is the approach reflected in the Adams administration’s NYC AI Action Plan.

“While artificial intelligence presents a once-in-a-generation opportunity to more effectively deliver for New Yorkers, we must be clear-eyed about the potential pitfalls and associated risks these technologies present,” said Mayor Adams at the press conference following the Action Plan’s launch. “I am proud to introduce a plan that will strike a critical balance in the global AI conversation—one that will empower city agencies to deploy technologies that can improve lives while protecting against those that can do harm.”

As we continue to interrogate artificial intelligence and its growing influence on our day-to-day lives, we must strike a balance between progress and responsibility.

How exactly should we strike that balance? There’s no easy answer. But for a place to start, you could always ask ChatGPT.